At Sicché, we are researchers and consultants first. We know that analyzing qualitative data—whether from communities, diaries, or pre-tasks—is a fascinating but time-consuming challenge.

Today, "feeding" data into an Artificial Intelligence (be it your team's preferred model or the one mandated by corporate policy) has become standard practice to speed up synthesis and uncover hidden patterns.

However, as experts who regularly use both our own platform and competitor tools, we’ve realized that the bridge between data collection and uploading to an external LLM is often the real bottleneck.

Sicché features its own internal AI designed for immediacy. It’s a simple, intentional tool meant for getting a quick overview of results, testing variables, or chatting with responses from specific activities.

But we are realistic: our core job is providing a top-tier research platform, not universal language modeling. For massive analysis or cross-project synthesis, it is natural to turn to giants like ChatGPT, Gemini, or Copilot. This is exactly where our latest development idea was born.

Using various global platforms like Recollective, Incling, Tellet or Indeemo alongside our own, we noticed a paradox: while the industry moves toward AI, exports are stuck in the 90s 😅.

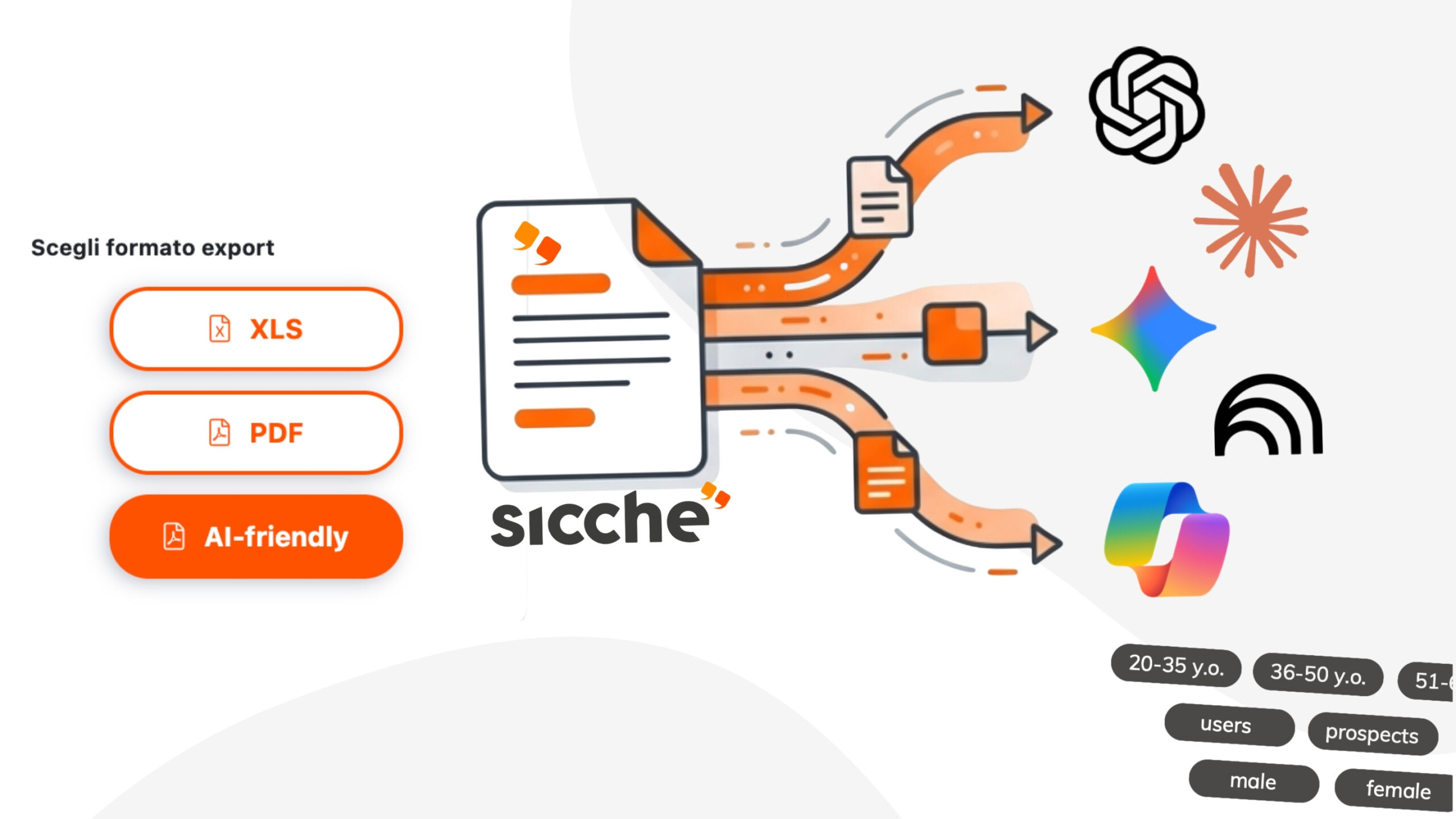

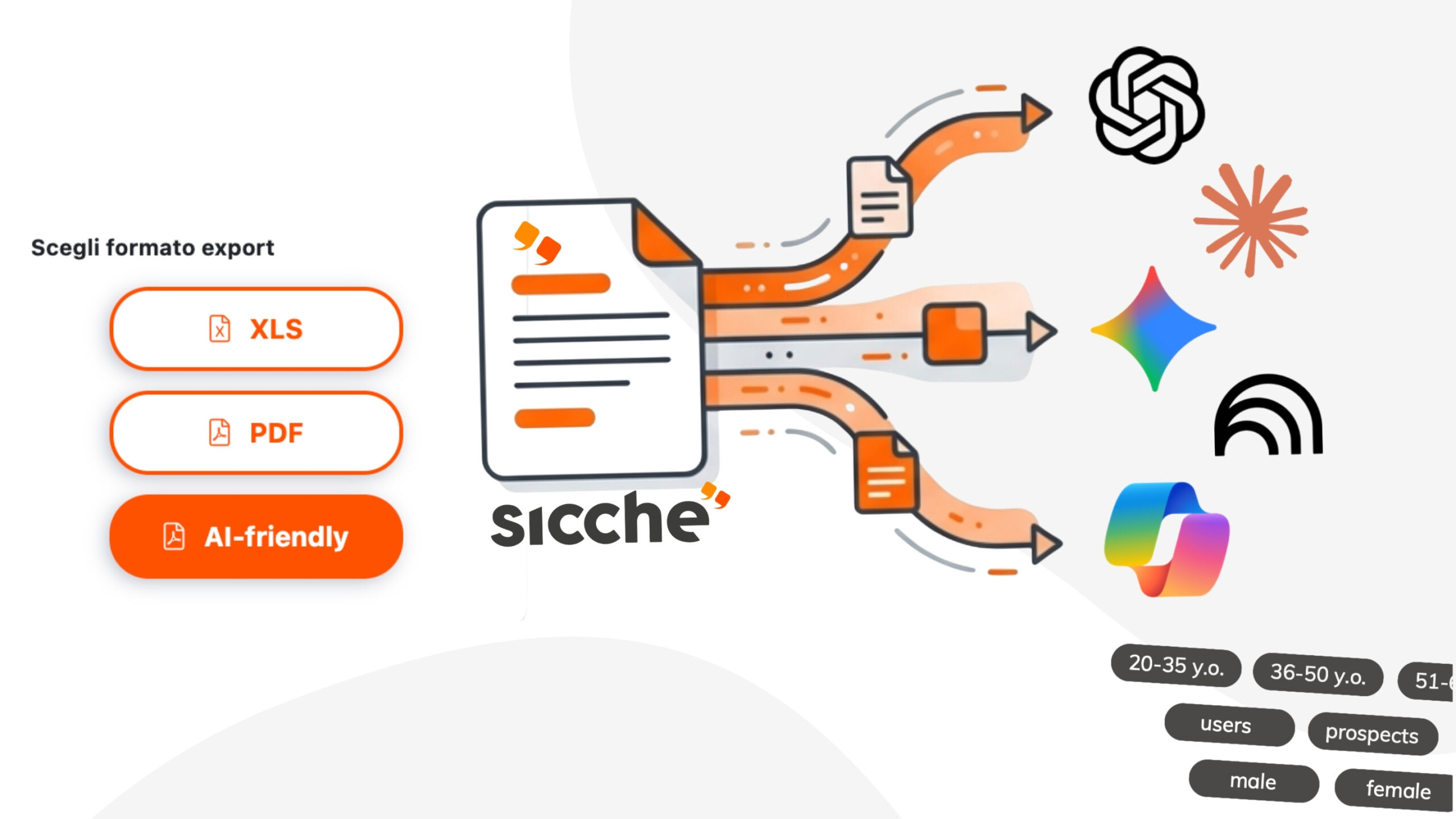

Word, Excel, and PDF files are designed for human eyes, not for the token limits of an LLM. Working closely with our development team, we reimagined the export process based on five pillars.

We are excited to announce that our new "AI-friendly" export format is now live on the platform.

We have rigorously tested it against the major LLMs and found that data analysis is significantly faster and more accurate than with traditional export formats.

Built by researchers for researchers, this tool removes the "noise" so you can focus entirely on the quality of your insights.
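
To make the "noise" concrete, here is a minimal Python sketch of the idea. It is not our actual export format: the sample responses, the function names, and the "about four characters per token" rule of thumb are all assumptions made purely for illustration. It renders the same two responses once as layout-heavy table markup and once as plain lines, then compares their rough size.

```python
import html

# Hypothetical sample data: two participant responses from one activity.
responses = [
    {"participant": "P01", "activity": "Diary day 1", "text": "I shop online mostly at night."},
    {"participant": "P02", "activity": "Diary day 1", "text": "I compare prices in store first."},
]

def verbose_export(rows):
    """Mimic a layout-oriented export: every value wrapped in styled table markup."""
    table_rows = []
    for r in rows:
        tds = "".join(
            f'<td style="font-family:Arial;border:1px solid #ccc">{html.escape(v)}</td>'
            for v in r.values()
        )
        table_rows.append(f"<tr>{tds}</tr>")
    return "<table>" + "".join(table_rows) + "</table>"

def compact_export(rows):
    """One plain-text line per response, with no styling at all."""
    return "\n".join(f'{r["participant"]} | {r["activity"]} | {r["text"]}' for r in rows)

def rough_token_estimate(text):
    """Very rough heuristic (assumed ~4 characters per token); real tokenizers differ."""
    return len(text) // 4

verbose = verbose_export(responses)
compact = compact_export(responses)

print("verbose export, rough tokens:", rough_token_estimate(verbose))
print("compact export, rough tokens:", rough_token_estimate(compact))
```

In this toy case the styled version is several times larger than the plain one while carrying exactly the same answers. Every character spent on layout is a character the model has to read without learning anything about your participants.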

Try it out on your next project and let us know what you think!